On more than one occasion, the Enterprise’s chief medical officer, Dr. Leonard McCoy, laments how barbaric 20th-century surgeons must have been to actually cut into their patients. While many of Star Trek’s gadgets are far from becoming reality, medicine has made important strides toward some of Dr. McCoy’s methods. A working tricorder might soon cross over from the realm of sci-fi, and as far as modern surgery is concerned, we’re finally nearing the age when the hand-held scalpel will become obsolete.

Doctors today use the best technology at their disposal to perform minimally invasive surgery. Using lasers, finely tuned precision robots and a myriad of sensors, surgeons can make as few incisions as possible to reach their targets, and thus avoid the risk of displacing or damaging important tissue or blood vessels. It’s now even possible, for instance, for top surgeons at a hospital in the US to operate remotely on a patient in India over the internet.

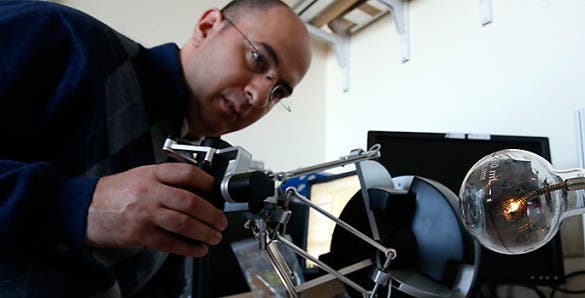

It’s truly remarkable, yet there are some tradeoffs compared with conventional open surgery. For one, surgeons lose the kind of awareness they have during open surgery, such as feeling the pressure exerted on organs and vital blood vessels. A team of researchers led by Nabil Simaan, associate professor of mechanical engineering at Vanderbilt University, was recently awarded a $3.6 million grant as part of the National Robotics Initiative to develop a new kind of machine intelligence that will assist doctors by increasing their awareness during surgery through the use of touch-sensitive robots.

Surgeons compensate for this lack of awareness by precisely mapping the trajectory of their incisions. They do so using tools like MRI, X-ray imaging and ultrasound to map the internal structure of the body before they operate, as well as miniaturized lights and cameras that provide visual images of the tissue immediately in front of the surgical probes during the operation.

Simaan and his team seek to take things to the next level by providing a form of sensory feedback comparable to touch. Their plan is to add various kinds of sensors and integrate the data they provide with pre-operative information, such as the maps described above, to produce dynamic, real-time maps that precisely track the position of the robot probe and show how the tissue in its vicinity responds to its movements.

Surgery of the future

Adding pressure sensors to robot probes will provide real-time information on how much force a probe is exerting against the surrounding tissue. This sensor data can also feed into computer simulations that predict how various body parts shift in response to the probe’s movements. The team will also generate models that estimate the locations of hidden anatomical features such as arteries and tumors.

At the heart of this initiative is a technique called Simultaneous Localization and Mapping (SLAM), which allows mobile robots to map unexplored areas while keeping track of their own position within them. These maps will form the foundation of the Complementary Situation Awareness (CSA) framework. With all of these pieces in place, some surgical tasks, such as tying off a suture, resecting a tumor or ablating tissue, will become semi-automatic.

“We will design the robot to be aware of what it is touching and then use this information to assist the surgeon in carrying out surgical tasks safely,” Simaan said.

The designs Simaan and his team produce in this project might find applications beyond medicine. The researchers envision their CSA framework helping to disarm bombs, guiding a human operator of a robotic excavator digging out the foundation of a new building without damaging underground pipes, or assisting rescue robots searching deep tunnels for injured miners.

“In the past we have used robots to augment specific manipulative skills,” said Simaan. “This project will be a major change because the robots will become partners not only in manipulation but in sensory information gathering and interpretation, creation of a sense of robot awareness and in using this robot awareness to complement the user’s own awareness of the task and the environment.”