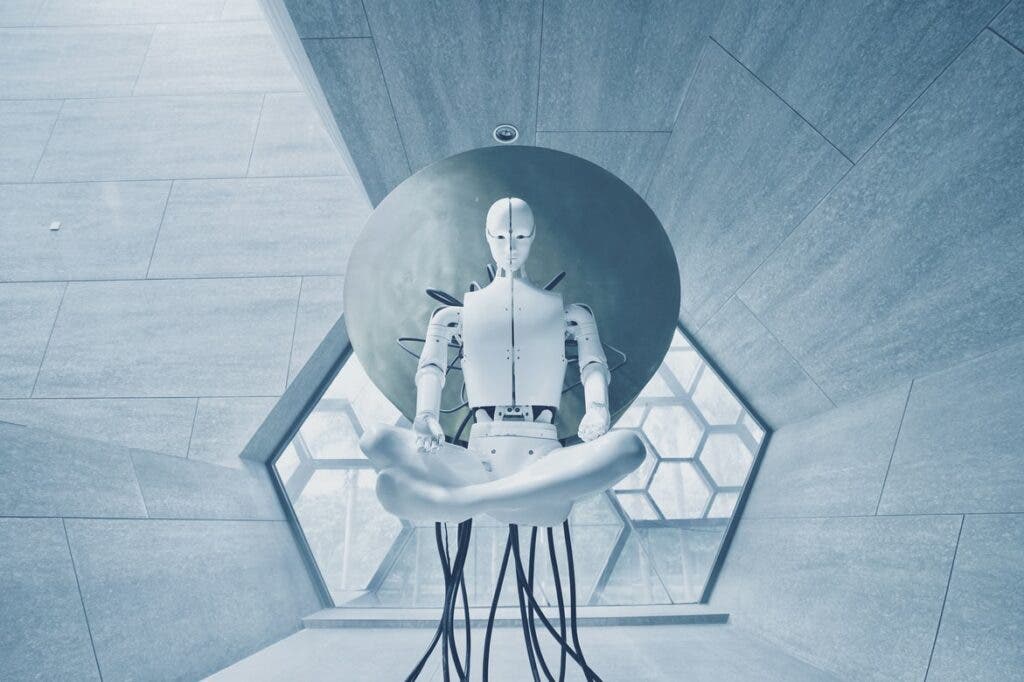

Researchers in China claim to have developed the world’s first artificial intelligence (AI) system capable of analyzing case files and charging people with crimes. The Orwellian system can already identify ‘dissent’ against the state, which raises the prospect of removing human judgment and oversight from the prosecution process.

The program can file a charge based on a written case description with 97% accuracy, according to the Chinese Academy of Sciences team that developed it, but many see it as a tool that could be used for nefarious purposes.

The crimes the robot can prosecute include “subversion of the political power of the State” and “sabotaging national unity”, vaguely defined offenses that are often charged against dissidents and that the UN believes can be used against the ‘communication of thoughts or ideas’. The prospect of an AI prosecuting differing views or unorthodox beliefs is causing concern about what these terms cover and whether the state could use the system to expand their legal definition, with no one taking responsibility for the growing limits on civil liberties.

Removing the human decision process from the legal system

The robot was built and tested in the Shanghai Pudong People’s Procuratorate, the country’s largest and busiest district prosecution office, where the team plans to expand it to cover more crimes and a higher caseload.

According to Professor Shi Yong, director of the Chinese Academy of Sciences’ big data and knowledge management laboratory, the technology could reduce prosecutors’ daily workload, allowing them to focus on more challenging tasks. He goes further, hinting at automated prosecution: “The system can replace prosecutors in the decision-making process to a certain extent.”

The South China Morning Post reported that the algorithm behind the system can run on a standard desktop computer and press charges based on 1,000 illegal or discordant traits extracted from the human-written case description.

Engineers trained the machine on more than 17,000 cases from 2015 to 2020. At present, it can identify and press charges for Shanghai’s eight most commonly committed crimes: credit card fraud, gambling, dangerous driving, theft, fraud, intentional injury, obstructing official duties, and ‘picking quarrels and provoking trouble’, a charge often used to silence nonconformists. For now, however, the system has no role in the decision-making process and does not suggest the length of prison sentences.

Despite this, there are already fears it will fail to keep up with changing social standards and could be used to subdue progressive ‘freethinkers.’

Artificial intelligence in law enforcement is becoming widespread

AI technology already exists in law enforcement, but this would be the first time it presses charges.

For instance, image recognition and digital forensics help with caseloads in Germany, while China has used a tool known as System 206 since 2016 to evaluate evidence, the conditions for an arrest, and whether a suspect poses a danger to the public.

Forging ahead in the global AI race, Chinese authorities launched the country’s first cyber court in 2017, allowing parties in cyber-related lawsuits such as e-commerce disputes to appear via video in front of AI-based judges. While the idea is to help the system deal with larger caseloads, human judges still monitor every step before making a ruling.

Humans remain in the loop because making such decisions would require a machine to identify complex human language and translate it into a format that a computer can understand. That calls for natural language processing (NLP), a set of techniques for analyzing text, recordings, or images created or uploaded by people, and it requires supercomputers that prosecutors cannot access.

In a step towards an NLP system on a basic computer, the team plans to upgrade their machine to recognize less common crimes and file multiple charges against a single suspect.

AI is also being used to monitor government officials

In the meantime, China is making aggressive use of AI in nearly every government sector in an attempt to improve efficiency, reduce corruption and strengthen control. According to the researchers, some cities have used machines to monitor government employees’ social circles and activities to detect corruption; there is no mention of whether the state uses the system to pick up ‘quarrelsome’ traits in the officials or their peers.

Meanwhile, courts in the country have also been using this technology to help judges process legal documents and make decisions such as accepting or rejecting an appeal. Most Chinese prisons have now adopted AI to track prisoners’ physical and mental status, with the aim of reducing violence. This comes at a time when authorities are using torture, sexual assault, and hard labor to subjugate prisoners, with many ‘re-education’ camps for religious and ethnic minorities now operating outside legal jurisdiction.

One prosecutor in Guangzhou, who asked not to be named, voiced concerns about the new computerized judge and jury: “The accuracy of 97 percent may be high from a technological point of view, but there will always be a chance of a mistake. Who will take responsibility when it happens? The prosecutor, the machine, or the designer of the algorithm?” He added that many human prosecutors will not want computers interfering in their work: “AI may help detect a mistake, but it cannot replace humans in making a decision.”

To sum up, it will be interesting to see what happens when extenuating circumstances are removed from a legal system and replaced with a one-thought-fits-all program.
