Many important problems related to how Earth’s climate works, as well as how we can produce and use energy more efficiently, require extraordinarily complex calculations. Simulating the nuanced effects of ever-shifting clouds on climate fits into this category, as does evaluating a vast array of materials to optimize photovoltaic production or energy storage.

Such problems are becoming increasingly difficult to solve with classical computers running programs based on binary codes, especially as the scale of the necessary computations grows. For this reason, many problems are tackled using models that rely on approximations (e.g., parameterizations of climate-influencing processes like cloud formation or climate-influenced phenomena like hurricanes) to reduce computational expense. The trade-off for this capability, however, is that these models are not as precise as we’d like them to be. And even with these approximations, supercomputers are approaching the limits of conventional computational power. So where can we turn to keep making progress on complex, but pressing, scientific problems?

Recently, researchers have identified potential high-impact applications of quantum computing technologies, focusing, for example, on their use in research related to climate change, renewable energy [Giani and Eldredge, 2021], and the development of optimized energy generation and storage solutions [Paudel et al., 2022].

Basics of Quantum Computing

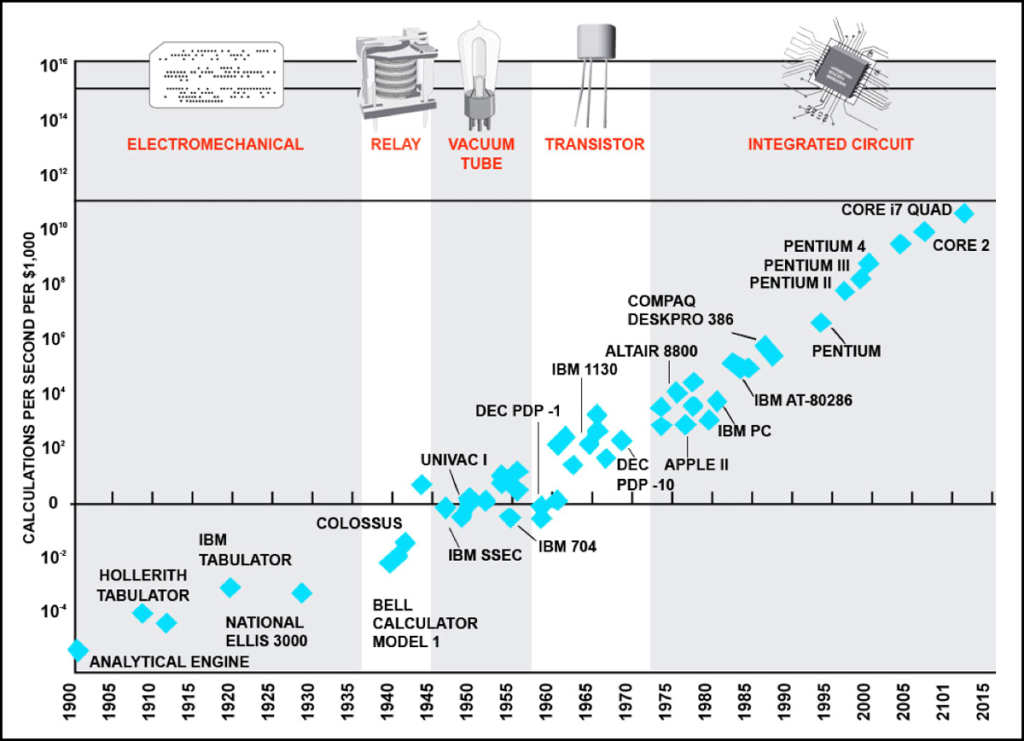

Since the 1960s, semiconductor transistors, the building blocks of logic gates and modern computers, have become smaller and faster, enabling incredible increases in computer power. In 1965, Gordon Moore observed that the sizes of new transistors that were being developed were shrinking so fast that with each passing year, twice as many could fit onto a microchip [Moore, 1965]. He also forecasted that this trend—later revised to suggest a doubling every 2 years—would continue for some time into the future (Figure 1). However, modern computers are now meeting the limit of this expansion as new transistors are so small (roughly 5 nanometers) that undesirable quantum effects (e.g., tunneling), which alter the predictability of semiconductor behavior, are becoming unavoidable.

Quantum computing is an approach to computation that takes advantage of the quantum properties of materials and particles that dominate at small scales. In classical binary computing, the bit is the basic unit of information, and each bit can be coded as either of two states: 0 or 1. In contrast, quantum bits, or qubits, the basic unit of information for quantum computers, can exist in a combination of both 0 and 1 at the same time, a property called superposition (Figure 2).

Once measured, a qubit “collapses” to a state of 0 or 1—much like how Schrödinger’s famous cat, once observed, is found either alive or not rather than existing as a superposition of both possibilities. Regardless of any later measurement, though, the advantage of superposition is that more states are accessible for computation.
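The collapse described above can be illustrated with a short classical simulation. This is a minimal sketch, not a real quantum program: it assumes a single qubit described by two amplitudes (here called `alpha` and `beta`, names chosen for illustration) and uses the standard rule that the probability of measuring 0 or 1 is the squared magnitude of the corresponding amplitude.

```python
import math
import random


def measure(alpha: complex, beta: complex, rng: random.Random) -> int:
    """Simulate one measurement of a qubit in the state alpha|0> + beta|1>.

    The outcome is 0 with probability |alpha|^2 and 1 with probability |beta|^2.
    """
    p0 = abs(alpha) ** 2
    p1 = abs(beta) ** 2
    if not math.isclose(p0 + p1, 1.0, rel_tol=1e-9):
        raise ValueError("amplitudes must be normalized")
    return 0 if rng.random() < p0 else 1


def measurement_counts(alpha: complex, beta: complex, shots: int = 10_000, seed: int = 0) -> dict:
    """Prepare and measure the same state many times and tally the outcomes."""
    rng = random.Random(seed)
    counts = {0: 0, 1: 0}
    for _ in range(shots):
        counts[measure(alpha, beta, rng)] += 1
    return counts


# Equal superposition: each measurement yields 0 or 1, never a value in between.
amp = 1 / math.sqrt(2)
counts = measurement_counts(amp, amp)
print(counts)
```

Each individual measurement returns a definite 0 or 1. The superposition shows up only in the statistics of many repeated measurements, which split roughly evenly for this state.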

Two classical bits can occupy 2² = 4 different configurations (i.e., 00, 01, 10, or 11), but only one of those configurations at a time can be used for computation. Two qubits, on the other hand, can occur in combinations of these four configurations at the same time, and all of them can be used simultaneously to enable far more rapid parallel calculations. This advantage grows exponentially with more qubits as the number of available states increases: for n qubits, 2ⁿ states are available for computations. With 30 qubits, for example, more than 1 billion configurations are accessible.
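The exponential growth in configurations is easy to check numerically. The sketch below counts the basis states for n qubits and, as an added illustration, estimates the memory a classical computer would need to store one complex amplitude per state. The 16 bytes per amplitude is an assumption (a double-precision complex number), not a figure from the discussion above.

```python
def num_basis_states(n_qubits: int) -> int:
    """Number of distinct 0/1 configurations of n qubits (2 to the power n)."""
    return 2 ** n_qubits


def statevector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory needed to store one amplitude per configuration on a classical machine.

    bytes_per_amplitude=16 assumes double-precision complex numbers.
    """
    return num_basis_states(n_qubits) * bytes_per_amplitude


for n in (2, 10, 30):
    gib = statevector_bytes(n) / 2**30
    print(f"{n} qubits: {num_basis_states(n):,} states, {gib:.6f} GiB to store")
```

Under that assumption, 30 qubits correspond to 1,073,741,824 configurations and about 16 GiB of classical memory, and each additional qubit doubles both numbers.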

A Variety of Technologies

A qubit is an abstract mathematical model for information that must be physically implemented in hardware. Many different hardware approaches for creating qubits and pursuing quantum computing are emerging. Companies including IBM, Google, and D-Wave are using superconductors to create qubits and microwave pulses to manipulate the information they hold, whereas IonQ and Honeywell are developing an approach using trapped ions suspended in a vacuum and manipulated with lasers. Intel is building qubits with silicon quantum dots in which the quantum state depends on the spin of an electron in silicon and is manipulating these qubits with microwaves. Elsewhere, researchers are busy working out how to develop photonic quantum computers using light beams and optical circuits [Andersen, 2021].

Currently, each of these approaches has advantages and disadvantages in terms of their coherence time (how long information can be kept in the qubits), error rate, difficulty to manipulate, and cost. And with all of them, the fragility of quantum states and the extreme precision needed in their fabrication pose challenges to building a large-scale, fault-tolerant quantum computer—that is, one that can execute programs and produce accurate results despite defects or failures in some hardware or software components. A common challenge for many of the technologies, for example, is the requirement that the qubits be maintained at temperatures close to absolute zero to prevent quantum information from decaying (although photonic technologies do not require such low temperatures).

Verifying and benchmarking the performance of quantum computers is challenging for many reasons. For example, the wide variety of quantum hardware being developed and the different rates at which these technologies will develop make it difficult for a “snapshot” assessment at a given point in time to predict how these technologies will perform. Also, the strength of each hardware implementation depends greatly on how it is applied, and comparisons with classical computing approaches are not obvious because classical algorithms are also advancing. Implementing communication and control measures to ensure that operations occur accurately and that information can be moved from place to place is also challenging [Franklin and Chong, 2004].

Finding the Right Problems

Building quantum computers is monumentally difficult in and of itself, but we must do more than build them to realize their benefits. Quantum computers are not universally better at all tasks than conventional computers—they are merely different, and that difference can, in some cases, be leveraged to do amazing things. And as with conventional computing, quantum computing requires careful attention to the design of software and algorithms for specific purposes and problems. As a result, a key challenge for quantum computing researchers is to identify promising applications in which the important problems are well matched to the strengths of quantum computers and for which software and algorithms can be crafted to capitalize on these strengths (Table 1).

Table 1. Examples of How Quantum Computing Could Help to Address Climate Change- and Energy-Related Challenges

| Category | Challenge | Potential Benefits of Quantum Computing |
| --- | --- | --- |
| Climate modeling and weather forecasting | Meeting computational needs as the complexity and resolution of simulation and forecasting models grow | Greater capability to solve fluid dynamics–based simulations could facilitate model improvements, allowing clearer understanding of likely future conditions and improving mitigation and adaptation planning. |
| Grid safety and resilience | Ensuring power generation facilities are robust and reliable in the future | Enhanced weather and climate models could allow for safer siting of infrastructure, and quantum optimization can be applied to improve the design of new resources like wind farms. |
| Grid management | Scheduling and dispatching resources to match supply and demand, especially as the number and distribution of generators (e.g., wind and solar) grow | Quantum optimization could help create cost-effective management solutions and could lower consumer prices by improving operating conditions (e.g., by solving alternating current optimal power flow equations). |
| Quantum chemistry | Evaluating molecular-scale properties and processes of a vast array of materials to foster technology innovation | Quantum computing could accelerate discovery and development of new energy production (e.g., photovoltaic) and storage (e.g., battery) technologies, as well as improved strategies for climate change mitigation (e.g., carbon capture). |

In particular, well-suited applications will make use of quantum computers’ abilities to explore large, structured spaces of potential solutions. Thus, model optimization problems offer some of the most fertile ground for quantum computing applications. Algorithms for solving optimization problems, which involve finding best fit values for multiple interacting variables in a solution space, can get “stuck” on a localized solution, or optimum, whereas if the algorithm had kept searching, it might have found an even better solution (e.g., a better fit to observed data or a more plausible what-if scenario). Quantum computing can take advantage of “tunneling” effects to break out of local optima and keep searching.
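The problem of getting “stuck” can be seen in a purely classical toy example. The sketch below does not simulate quantum tunneling. It only shows how a simple downhill search stops at a local optimum that an exhaustive search would beat. The cost landscape is hypothetical, chosen so that a shallow minimum sits to the left of the true minimum.

```python
# Hypothetical cost values for candidate solutions 0..10.
# Index 2 is a local minimum (cost 2); index 8 is the global minimum (cost 0).
LANDSCAPE = [5, 3, 2, 3, 5, 6, 4, 1, 0, 2, 4]


def greedy_descent(landscape: list, start: int) -> int:
    """Move to the better neighboring solution until no neighbor improves the cost."""
    x = start
    while True:
        neighbors = [i for i in (x - 1, x + 1) if 0 <= i < len(landscape)]
        best = min(neighbors, key=lambda i: landscape[i])
        if landscape[best] >= landscape[x]:
            return x  # no neighbor is better: a (possibly only local) optimum
        x = best


def global_minimum(landscape: list) -> int:
    """Exhaustive search: always finds the best solution but checks every candidate."""
    return min(range(len(landscape)), key=lambda i: landscape[i])


print("greedy from 0:", greedy_descent(LANDSCAPE, 0))  # stops at the local optimum
print("greedy from 5:", greedy_descent(LANDSCAPE, 5))  # happens to reach the global one
print("exhaustive:   ", global_minimum(LANDSCAPE))
```

Whether the downhill search succeeds depends entirely on where it starts, and exhaustive search becomes impractical as the solution space grows. That gap is what methods capable of escaping local optima aim to close.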

Optimization applications have led to significant interest in quantum computing as a means of training machine learning models, which in turn open up a host of other applications. Likewise, quantum computers may be naturally suited to solve certain linear algebra problems, such as those arising in stress analysis and fluid flow, which are also ubiquitous in science and engineering. In general, because the advantages of quantum computing often emerge as the scale of required computations grows, applications for which current computers are struggling to handle the large array of calculations and inputs involved are likely where the biggest value of quantum computing lies. Many examples of such applications are found in Earth science, specifically with respect to studying climate and weather and to improving our use of energy resources.

Benefits in Climate and Energy Applications

Quantum computing may be especially useful in applications involving fluid dynamics–based problems and simulations, such as those in climate modeling and weather forecasting. Approaches have been developed to apply quantum computing to the nonlinear differential equations that are key for working on fluid dynamics problems [Lubasch et al., 2020].

Improvements in both near-term weather forecasting and longer-term climate predictions achieved with quantum computing could benefit the resilience and reliability of energy systems. Weather forecasts are increasingly important for managing variable wind and solar power sources, and clearer predictions of climate could allow for better siting of power generation infrastructure away from areas expected to be affected in the future by, for example, increased flooding or wildfires. Furthermore, quantum optimization techniques can be repurposed to design and operate generating facilities like wind farms more efficiently, for instance, through the optimization of turbine layouts to minimize wake effects and maximize energy production.

Another broad category of interest is the simulation of fundamental chemistry processes, which are innately quantum in nature because of the atomic scale at which the physics involved occurs. Chemistry simulations enabled by quantum computers could help us understand large complex molecules and allow for better design of chemicals and processes relevant to the energy industry. Such molecules could, for example, aid in developing processes for carbon capture or the electrolysis of water or in designing photovoltaic materials [Almosni et al., 2018] or energy storage technology [Rice et al., 2021].

There is also major interest in using quantum computing to improve energy management, specifically the scheduling and dispatch of generation resources on the power grid. One question of interest is whether quantum computers can find optimal solutions to alternating current optimal power flow (ACOPF) equations. These equations help to determine the best operating levels for electric power plants that allow them to meet electricity demands while minimizing operating costs. Solving ACOPF problems is becoming increasingly difficult as the number of small, variable power generators on the grid (particularly wind and solar generators) increases, creating a scaling issue. Even slightly more optimized ACOPF solutions could potentially save money for consumers and producers and help mitigate climate change by reducing energy waste.
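The scaling issue can be sketched with a drastically simplified dispatch problem. This is not ACOPF: it ignores the network, alternating current physics, and losses, and simply picks an output level for each generator so that demand is met at the lowest cost. The generators, output levels, and costs below are all hypothetical values chosen for illustration.

```python
from itertools import product

# Hypothetical generators: (name, allowed output levels in MW, cost per MWh).
GENERATORS = [
    ("gas", (0, 50, 100), 40.0),
    ("wind", (0, 30, 60), 5.0),
    ("solar", (0, 20, 40), 8.0),
]


def cheapest_dispatch(generators, demand_mw):
    """Brute-force search over every combination of output levels for one hour."""
    best_cost, best_plan = None, None
    for levels in product(*(g[1] for g in generators)):
        if sum(levels) < demand_mw:
            continue  # this combination does not meet demand
        cost = sum(level * g[2] for level, g in zip(levels, generators))
        if best_cost is None or cost < best_cost:
            best_cost = cost
            best_plan = dict(zip((g[0] for g in generators), levels))
    return best_cost, best_plan


def search_space_size(generators):
    """Number of combinations the brute-force search must examine."""
    size = 1
    for _, levels, _ in generators:
        size *= len(levels)
    return size


cost, plan = cheapest_dispatch(GENERATORS, demand_mw=120)
print(plan, cost)
print("combinations checked:", search_space_size(GENERATORS))
```

With three generators and three levels each, only 27 combinations need checking. Every added generator multiplies that count, so a grid with many small wind and solar units quickly outgrows this kind of exhaustive search, even before the real ACOPF constraints are included.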

Putting Quantum Computing to Use

The deployment of large-scale, fault-tolerant quantum computers is likely still a few years in the future. But while we await that milestone, preparations can be made to develop software and strategies for using these computers to address important problems related to climate and energy.

The quantum computing research community has made significant progress on algorithm development, but collaboration between this community and climate and Earth science researchers with relevant domain knowledge is needed to ensure that development efforts meet the needs of real-world applications. Researchers from both communities must exchange ideas to get a clearer picture of what challenges remain in both fields. Meetings like the IEEE Quantum Week workshop on Quantum Computing Opportunities in Renewable Energy and Climate Change and the Q4Climate workshop represent early efforts to bring together these two communities.

Other sectors have important roles to play as well. Governments around the world are providing support by investing in quantum information science [e.g., Giani and Eldredge, 2021], which is crucial for enabling further development. And industry will fill the gaps from pure research to technology development to application in the real world. With cooperation among all these sectors, the coming quantum computing revolution can be brought to bear on the critical climate and energy challenges we face.

References

Almosni, S., et al. (2018), Material challenges for solar cells in the twenty-first century: Directions in emerging technologies, Sci. Technol. Adv. Mater., 19(1), 336–369, https://doi.org/10.1080/14686996.2018.1433439.

Andersen, U. L. (2021), Photonic chip brings optical quantum computers a step closer, Nature, 591, 40–41, https://doi.org/10.1038/d41586-021-00488-z.

Franklin, D., and F. T. Chong (2004), Challenges in reliable quantum computing, in Nano, Quantum and Molecular Computing, pp. 247–266, Springer, New York, https://doi.org/10.1007/1-4020-8068-9_8.

Giani, A., and Z. Eldredge (2021), Quantum computing opportunities in renewable energy, SN Comput. Sci., 2, 393, https://doi.org/10.1007/s42979-021-00786-3.

Lubasch, M., et al. (2020), Variational quantum algorithms for nonlinear problems, Phys. Rev. A, 101(1), 010301(R), https://doi.org/10.1103/PhysRevA.101.010301.

Moore, G. (1965), Cramming more components onto integrated circuits, Electronics, 38(8), 114, https://newsroom.intel.com/wp-content/uploads/sites/11/2018/05/moores-law-electronics.pdf.

Paudel, H. P., et al. (2022), Quantum computing and simulations for energy applications: Review and perspective, ACS Eng. Au, 2(3), 151–196, https://doi.org/10.1021/acsengineeringau.1c00033.

Rice, J. E., et al. (2021), Quantum computation of dominant products in lithium–sulfur batteries, J. Chem. Phys., 154, 134115, https://doi.org/10.1063/5.0044068.

Author Information

Annarita Giani, General Electric Research, Niskayuna, N.Y.; and Zachary Goff-Eldredge, U.S. Department of Energy Solar Energy Technologies Office, Washington, D.C.

This article originally appeared in Eos Magazine and was republished under a CC BY-NC-ND 3.0 license.
