Mind-controlled devices, also known as brain-computer interfaces, have come a long way in just the past few years, offering many people who are paralyzed or missing limbs the chance of living a normal life. People without such conditions may benefit from brain-computer interfaces as well, either for fun (why bother with gamepads or even Kinect when you can control everything in a video game just by thinking about it?) or for other applications (a while ago we wrote about a military project in which soldiers could control an avatar robot with their thoughts; crazy stuff!). Just how easy is it to use these interfaces, though?

A study recently conducted by scientists at the University of Washington analyzed the brain activity of seven people with severe epilepsy, who had their skulls drilled and thin sheets of electrodes placed directly on their brains. This served a double purpose: physicians could scan for epilepsy signals, while a bioengineering team studied which parts of the brain were used as the patients learned to move a cursor using their thoughts alone. The findings took everyone by surprise.

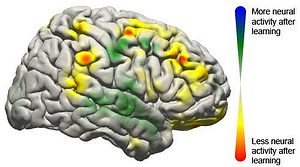

Electrodes on the patients’ brains picked up the signals directing the cursor to move and sent them to an amplifier and then to a laptop to be analyzed. Within 40 milliseconds, the computer calculated the intentions transmitted through the signal and updated the movement of the cursor on the screen. Apparently, when the brain uses interface technology, it behaves much like it does when performing simple motor skills such as kicking a ball, typing or waving a hand. What’s most impressive is that it takes only 10 minutes for this automation to kick in: over that time, the researchers observed activity shift from being centered on the prefrontal cortex, which is associated with learning new skills, to areas seen during more automatic functions.
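For the curious, the loop described above (grab a short burst of signal, work out the intent, nudge the cursor) can be sketched in a few lines of Python. This is only a toy illustration and not the researchers’ actual software: the feature (average signal power), the linear decoder, the `baseline` and `gain` parameters and the sample values are all made up for the example. The only number taken from the study is the 40-millisecond update step.

```python
UPDATE_INTERVAL_S = 0.040  # the 40 ms update step mentioned in the article


def band_power(window):
    """Mean squared amplitude of one window of samples (a stand-in feature)."""
    return sum(x * x for x in window) / len(window)


def cursor_velocity(power, baseline, gain=1.0):
    """Hypothetical linear decoder: power above the baseline pushes the
    cursor up, power below the baseline pushes it down."""
    return gain * (power - baseline)


def run_cursor(windows, baseline, gain=1.0, start=0.0):
    """Advance a 1-D cursor position by one step per window of samples."""
    position = start
    trajectory = []
    for window in windows:
        velocity = cursor_velocity(band_power(window), baseline, gain)
        position += velocity * UPDATE_INTERVAL_S
        trajectory.append(position)
    return trajectory


# Made-up samples: one window with a strong signal, then one with none.
windows = [[2.0, -2.0, 2.0, -2.0], [0.0, 0.0, 0.0, 0.0]]
print(run_cursor(windows, baseline=1.0))
```

With these invented samples the cursor moves up during the strong window and drifts back down during the quiet one. A real system would have to calibrate the baseline and gain for each patient, which is presumably part of what the learning period is for.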

“What we’re seeing is that practice makes perfect with these tasks,” Rajesh Rao, a UW professor of computer science and engineering and a senior researcher involved in the study, said in a school news release. “There’s a lot of engagement of the brain’s cognitive resources at the very beginning, but as you get better at the task, those resources aren’t needed anymore and the brain is freed up.”

This is the first study to clearly map neurological signals throughout the brain while a subject learns to use a brain-computer interface.

“We now have a larger-scale view of what’s happening in the brain of a subject as he or she is learning a task,” Rao said. “The surprising result is that even though only a very localized population of cells is used in the brain-computer interface, the brain recruits many other areas that aren’t directly involved to get the job done.”

Brain-computer interfaces are definitely gaining traction, but let’s face it, the technological limitations are rather cumbersome. For one, the procedure is extremely invasive: you need your skull drilled and electrodes implanted for the interface to become effective. Sure, we’ve seen some work perform pretty well using only electrodes temporarily attached to the scalp, but the signal picked up that way is too weak for the interface to be reliable, especially for those in need of smart prosthetics. Now, armed with a better understanding of how the brain reacts, scientists might come up with solutions that don’t entail drilling holes through skulls.

“This is one push as to how we can improve the devices and make them more useful to people,” said Jeremiah Wander, a doctoral student in bioengineering. “If we have an understanding of how someone learns to use these devices, we can build them to respond accordingly.”

The findings were reported in a paper published in the journal PNAS.
