Two archers are competing: one is accurate, the other is precise. Who do you bet on? Think carefully: who has the better chance of scoring higher?

The answer to that question is not as simple as it sounds. For starters, accuracy and precision don’t mean the same thing, as you may be inclined to think: you can be precise without being accurate, or vice versa.

Bull’s eye

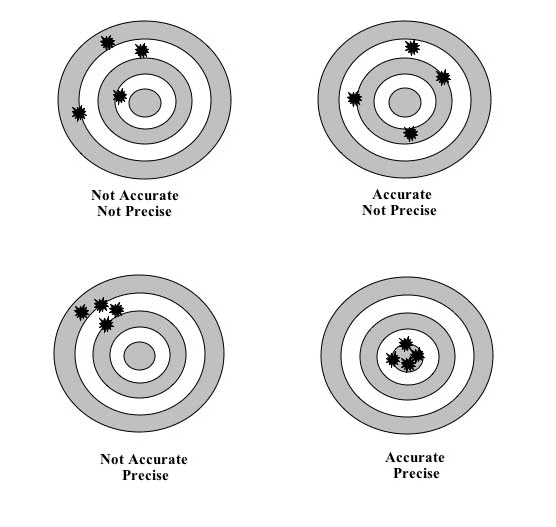

Perhaps the easiest way to get an intuitive idea about what the two terms mean is through an image (as above). In our archer example, if the arrows are close to each other but not close to the bull’s eye, they are precise but not accurate. If they are fairly close to the center but not to each other, they are accurate but not precise. If they are close to each other and close to the center, they are both accurate and precise.
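To put rough numbers on the picture, here is a minimal Python sketch. The arrow coordinates are made up, and `describe_shots` is a hypothetical helper rather than a standard function. It reports how far the center of a group of arrows sits from the bull’s eye (a stand-in for accuracy) and how far the arrows land, on average, from their own center (a stand-in for precision).

```python
import math

def describe_shots(shots, target=(0.0, 0.0)):
    """Summarize a group of (x, y) arrow positions relative to a target."""
    n = len(shots)
    # Center of the group: the average position of all arrows.
    cx = sum(x for x, _ in shots) / n
    cy = sum(y for _, y in shots) / n
    # Accuracy stand-in: distance from the group's center to the bull's eye.
    offset = math.hypot(cx - target[0], cy - target[1])
    # Precision stand-in: average distance of the arrows from their own center.
    spread = sum(math.hypot(x - cx, y - cy) for x, y in shots) / n
    return offset, spread

# Invented arrow positions, in centimeters from the bull's eye at (0, 0).
precise_archer = [(10.0, 10.0), (10.5, 9.5), (9.5, 10.5), (10.0, 10.0)]
accurate_archer = [(4.0, 0.0), (-4.0, 0.0), (0.0, 4.0), (0.0, -4.0)]

for name, shots in [("precise", precise_archer), ("accurate", accurate_archer)]:
    offset, spread = describe_shots(shots)
    print(f"{name} archer: group center {offset:.2f} cm off, spread {spread:.2f} cm")
```

With these invented numbers, the precise archer has a tight group that sits far from the center, while the accurate archer has a loose group centered on the bull’s eye.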

This isn’t a very accurate definition, to be fair. It’s more of an example. So how would we define the difference between precision and accuracy?

Let’s move away from our archers a bit and look at things a bit more scientifically. If you’re measuring something and you’re having errors, you may want to see what type of errors you have. So, another way of looking at accuracy and precision is as ways of defining what type of errors you have. Alternatively, you can think of precision as reproducibility, and of accuracy as trueness.

Accuracy refers to the degree of closeness of a measurement to the true value, in other words how far your measurement is from the true value. Precision refers to how close repeated measurements are to each other. So if all your values are close to each other, that doesn’t necessarily mean they’re right, just that the measurements are consistent.

Accuracy problems typically come from systematic errors: your measuring device or method isn’t good enough to detect whatever you aim to detect, so every reading is skewed in a similar way. Precision problems are more often associated with noise, randomness, electronic errors, temperature, or other factors that make repeated measurements vary. Accuracy is a measure of conformity to the true value, while precision is a measure of statistical variability.
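The two kinds of error can be told apart in a set of repeated readings. The sketch below is only an illustration: the readings are invented, the 100 g reference weight is an assumption, and `bias_and_spread` is a hypothetical helper. It uses the mean offset from the true value as a rough gauge of systematic error and the sample standard deviation as a rough gauge of random error.

```python
import statistics

TRUE_VALUE = 100.0  # assumed reference, e.g. a 100 g calibration weight

# Invented readings from two imaginary scales weighing the same reference.
scale_a = [102.1, 101.9, 102.0, 102.0, 102.0]  # consistent, but offset
scale_b = [97.0, 103.5, 99.0, 101.5, 99.0]     # scattered around the true value

def bias_and_spread(readings, true_value):
    # Bias (systematic error): mean reading minus the true value.
    bias = statistics.mean(readings) - true_value
    # Spread (random error): sample standard deviation of the readings.
    spread = statistics.stdev(readings)
    return bias, spread

for name, readings in [("scale A", scale_a), ("scale B", scale_b)]:
    bias, spread = bias_and_spread(readings, TRUE_VALUE)
    print(f"{name}: bias {bias:+.2f} g, spread {spread:.2f} g")
```

Here scale A comes out precise but not accurate (a bias of about 2 g with very little spread), and scale B comes out accurate on average but not precise (almost no bias, a spread of a few grams).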

The ISO (International Organization for Standardization) works on an even stricter definition. In the view of the ISO (in its ISO 5725 standard), accuracy refers to results that are both true (or close to true) and consistent. The ISO definition demands that an accurate measurement has no systematic error and no random error. So basically, to fit the ISO definition of accuracy, you need to be both accurate in the everyday sense (what the ISO calls trueness) and precise, but this definition is rarely used as such in practice.
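One common way to roll both kinds of error into a single number is the mean squared error against the true value, which splits exactly into squared bias plus variance. This is a standard statistical identity, not the ISO’s own formula, and the readings and the helper name `error_breakdown` below are invented for illustration.

```python
import statistics

def error_breakdown(readings, true_value):
    n = len(readings)
    # Total error: mean squared distance of the readings from the true value.
    mse = sum((x - true_value) ** 2 for x in readings) / n
    # Systematic part: squared offset of the mean reading from the true value.
    bias_squared = (statistics.mean(readings) - true_value) ** 2
    # Random part: population variance of the readings around their own mean.
    variance = statistics.pvariance(readings)
    return mse, bias_squared, variance

# Invented readings of an object whose true length is assumed to be 10.0 cm.
readings = [9.8, 10.4, 10.1, 10.5, 10.2]
mse, bias_squared, variance = error_breakdown(readings, 10.0)
print(f"mean squared error: {mse:.3f}")
print(f"bias squared + variance: {bias_squared + variance:.3f}")
```

The two printed values match, which is the point: the total error shrinks to zero only when both the systematic part and the random part do.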

So returning to our archery example, you’d want to pick the accurate archer — it matters less to you if he errs a bit to the left or to the right of the center, as long as he is close by. The precise archer would shoot all his arrows close to each other, but it’s not clear where on the board the arrows would land.

Obviously, the best quality archers (and similarly, the best quality scientific data) are both accurate and precise. Precision and accuracy are independent of each other, so you can have precision but not accuracy and vice versa, and it happens quite a lot that different scientific instruments don’t have identical accuracy and precision.

Sometimes, this matters quite a lot.

Where the difference between precision and accuracy matters

In day-to-day speech, the two words are often used interchangeably, and unless you mean something specific, that’s fine. But if you’re talking about something more scientific, or something that has to do with data, then you’d better be more precise (or is it accurate?).

Problems related to accuracy and precision affect many of us daily in at least one way: positioning.

GPS and other positioning systems always come with some error. They can be imprecise or inaccurate, or both. Although both the accuracy and the precision of satellite-based positioning have improved dramatically in recent years, they’re still not perfect (the errors are typically in the range of a couple of meters). Because GPS receivers filter out a lot of noise and signal biases, their precision tends to be quite good, but their accuracy still leaves room for improvement.

Of course, plenty of the tools we use regularly can have issues related to accuracy or precision. Weighing scales, for instance, can ruin your day by showing a bit of extra weight for no apparent reason. Weather predictions also suffer from precision and accuracy issues.

Still, the two concepts are especially important in science. Research labs have to constantly check and calibrate their instruments for both precision and accuracy. Instruments will typically have a specified value for accuracy as well as one for precision.

It’s not exactly rocket science, and it’s not exactly something everyone needs on a day-to-day basis, but this could be useful to know.
