Since the 1990s, biologists have witnessed a sudden collapse of amphibian populations. So far, hundreds of species have gone extinct after being struck by a deadly fungus. From mountain lakes to meadow puddles, across continents, frogs are dying, a decline that could spell ecological meltdown. There may be hope yet, according to a recent study, which found that frogs can learn to fight and adapt to the killer fungus under certain conditions. The findings provide a solid basis for potential future efforts to intervene in the pandemic and save the world’s amphibians.

A killer fungus

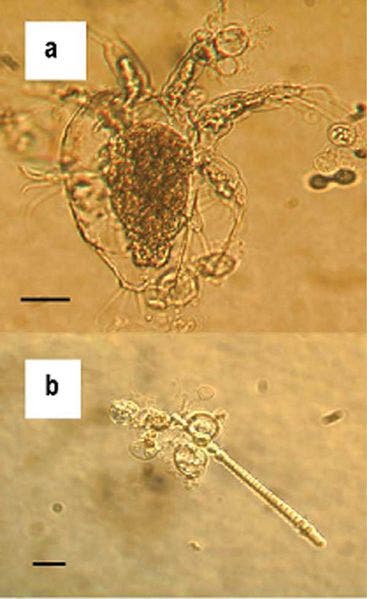

Batrachochytrium dendrobatidis, Bd for short, is the fungus in question. Its spores land on the skin of amphibians and burrow into it, where the fungus releases a toxin that slowly kills the host by paralyzing its immune cells. Since the fungus and its lethal effects were first observed, scientists have hypothesized that the infections may have driven hundreds of species extinct. The death toll could increase by a couple of orders of magnitude if the epidemic is left unchecked and a critical point is reached.

Amphibians are a vital part of the food chain. They feed on mosquitoes and all sorts of other insects, and in turn are an important food source for birds and other small animals. For instance, in the forests of the northeastern United States, the biomass of amphibians outweighs that of birds, mammals and all other vertebrates. If Bd is left unchecked, the world’s ecosystems, especially the fragile ones, could become seriously threatened.

The past two decades have seen many studies on Bd published, which have broadened our understanding of the fungus: how it infects its host, how it kills it, how it multiplies, its genetics and so on. We’ve also found that some amphibian populations have learned to fight Bd and have even rebounded after being nearly wiped out.

Will those who survive be the key?

Jason R. Rohr, an expert on the fungus at the University of South Florida, and colleagues believe these recovering amphibians mount a much stronger immune response to Bd infection. To test this theory, several Cuban tree frogs were infected with Bd spores and then placed in a heated chamber, where they stayed at 86 degrees Fahrenheit (30 degrees Celsius) for 10 days. The heat kills the fungus, clearing the infection. The researchers repeated the procedure three times.

Exposing the frogs to Bd repeatedly significantly improved their immune response. They produced more immune cells, and the fungus produced fewer spores. As a result, previously exposed frogs had a much better chance of surviving an infection than frogs that had never encountered the fungus. Moreover, the immune response became stronger after each exposure.

Dr. Rohr and his team also found that amphibians could avoid infections altogether by staying away from the fungus. In the experiment that demonstrated this, oak toads were placed in a two-sided chamber; one side was contaminated with fungal spores, while the other was fungus-free. Toads that had never been exposed to the fungus explored both sides of the chamber, becoming infected along the way. Toads that had previously been infected (then treated with heat, as in the first experiment) tended to avoid the contaminated side of the chamber.

These experiments show that it is possible for amphibian populations to beat the fungus. It’s highly plausible that some infected animals in the wild have managed to beat the infection by taking refuge in a warm spot, and that these survivors then stayed away from future infections and passed this resistance on to their offspring.

Recovering frog populations lend credence to this idea, but let’s not get ahead of ourselves. The odds are still in favor of the Bd fungus, so is there anything we can do? Dr. Rohr says spraying populations with dead Bd spores might improve their chances of survival when hit by Bd infections. Karen R. Lips of the University of Maryland is skeptical of this particular solution, noting that wild amphibians are already exposed to both live and dead spores. “We live in a Bd world,” she said. Even so, “any evidence that some amphibians are surviving with disease is good news,” she said.

The findings appeared in the journal Nature.
