Researchers at Carnegie Mellon University have repurposed a common piece of tech found in virtually every household into a tracking technology.

They used radio signals from WiFi routers to detect and track the three-dimensional shape and movements of human bodies in a room. They didn’t have to use any cameras or expensive LiDAR hardware.

“We believe that WiFi signals can serve as a ubiquitous substitute for RGB images for human sensing in certain instances. Illumination and occlusion have little effect on WiFi-based solutions used for interior monitoring. In addition, they protect individuals’ privacy and the required equipment can be bought at a reasonable price. In fact, most households in developed countries already have WiFi at home, and this technology may be scaled to monitor the well-being of elder people or just identify suspicious behaviors at home,” the authors wrote in their study, which has yet to be formally peer-reviewed and is available on the preprint server arXiv.

AI turns WiFi routers into cameras that can see through walls

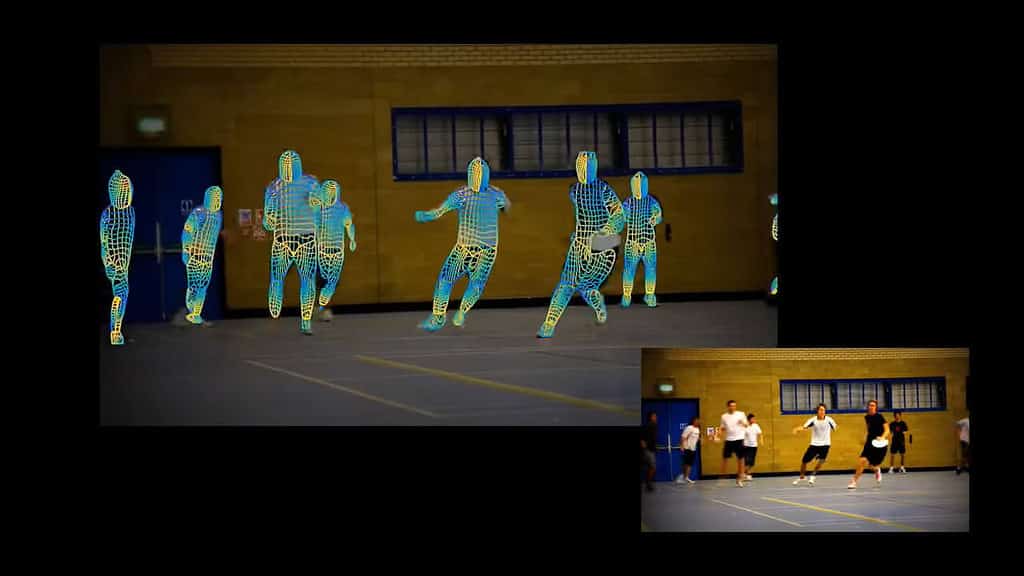

The team used DensePose, a system for mapping all of the pixels on the surface of a human body in a photo, developed by researchers at Facebook’s AI lab and a London-based team. What makes DensePose powerful is its ability to identify over two dozen key points and areas in the human body, such as joints and body parts like the arms, head, and torso. This allows the AI to describe a person’s pose.

Building on this, they used a deep neural network to map the phase and amplitude of the WiFi signals sent and received by the routers to coordinates on human bodies.
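To make "phase and amplitude" concrete, here is a minimal sketch, assuming each WiFi measurement arrives as a complex number (often called channel state information, a term the article itself does not use). The function names `amplitude_and_phase` and `unwrap_phase` are hypothetical, and this is only an illustration of the two quantities a network could take as input, not the researchers' actual pipeline.

```python
import cmath
import math


def amplitude_and_phase(samples):
    """Split complex channel samples into separate amplitude and phase lists."""
    amplitudes = [abs(h) for h in samples]        # signal strength
    phases = [cmath.phase(h) for h in samples]    # angle in radians, in (-pi, pi]
    return amplitudes, phases


def unwrap_phase(phases):
    """Remove artificial 2*pi jumps so the phase changes smoothly between samples."""
    if not phases:
        return []
    unwrapped = [phases[0]]
    for p in phases[1:]:
        delta = p - unwrapped[-1]
        # Fold the step into [-pi, pi) so we always take the shortest path.
        delta = (delta + math.pi) % (2 * math.pi) - math.pi
        unwrapped.append(unwrapped[-1] + delta)
    return unwrapped


# Hypothetical samples, invented for illustration only.
samples = [3 + 4j, 1j, -1 + 0j]
amplitudes, phases = amplitude_and_phase(samples)
```

Raw phase readings wrap around at plus or minus pi, which is why the sketch includes an unwrapping step before the values would be handed to any model.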

For their demonstration, the researchers used three $30 WiFi routers and three aligned receivers that bounce WiFi signals around the walls of a room. The system cancels out static objects and focuses on the signals reflected off moving objects, reconstructing the pose of a person in a radar-like image. This was shown to work even if there was a wall between the routers and the subjects.
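The article does not say how the static cancellation is done. One common stand-in, sketched below, is to subtract each channel's time average, which removes anything that stays constant (walls, furniture) and keeps what changes (a moving person). The function name `remove_static_component` and the sample values are hypothetical, and this is an assumption for illustration rather than the method used in the study.

```python
def remove_static_component(frames):
    """Subtract each channel's time average from every frame.

    frames: list of time steps, each a list of complex samples (one per channel).
    Returns frames of the same shape with the constant background removed.
    """
    if not frames:
        return []
    n_frames = len(frames)
    n_channels = len(frames[0])
    # The per-channel mean over time approximates reflections from static objects.
    background = [
        sum(frame[k] for frame in frames) / n_frames for k in range(n_channels)
    ]
    return [
        [frame[k] - background[k] for k in range(n_channels)] for frame in frames
    ]


# Hypothetical data: channel 0 sees only a static wall, channel 1 sees motion.
frames = [
    [2 + 1j, 1 + 0j],
    [2 + 1j, 3 + 0j],
    [2 + 1j, 5 + 0j],
]
dynamic = remove_static_component(frames)
```

In this toy input the first channel collapses to zero after subtraction, while the second keeps its variation around zero. A real system would likely need a sliding window rather than a global average so the background estimate can follow slow changes in the room.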

This approach could enable standard WiFi routers to see through a variety of opaque obstacles, including drywall, wooden fences, and even concrete walls.

This is not the first time researchers have attempted to “see” people through walls. In 2013, a team at MIT found a way to use cell phone signals for this purpose, and in 2018, another MIT team used WiFi to detect people in another room and translate their movements to stick figures.

However, the new study from the Carnegie Mellon team delivers much higher spatial resolution: the reconstructed poses show what the people moving through a room are actually doing.

Previously, another team at Carnegie Mellon developed a camera system that can ‘see’ sound vibrations with such precision and detail that it can reconstruct the music of a single instrument in a band or orchestra without using any microphones.

The researchers believe that WiFi signals “can serve as a ubiquitous substitute” for normal RGB cameras, citing several advantages: WiFi devices are already widespread, they are cheap, and WiFi is unaffected by the poor lighting and occlusion that hamper regular cameras. They add that “suspicious behaviors” inside a household could be detected and flagged.

However, the question remains: what constitutes “suspicious behaviors” in this context? With companies like Amazon attempting to put camera drones inside our homes, the widespread use of WiFi-enabled human detection raises concerns about breaches of personal privacy.

This technology may prove to be a double-edged sword, and it will be crucial to consider the implications before it hits the mainstream market.
