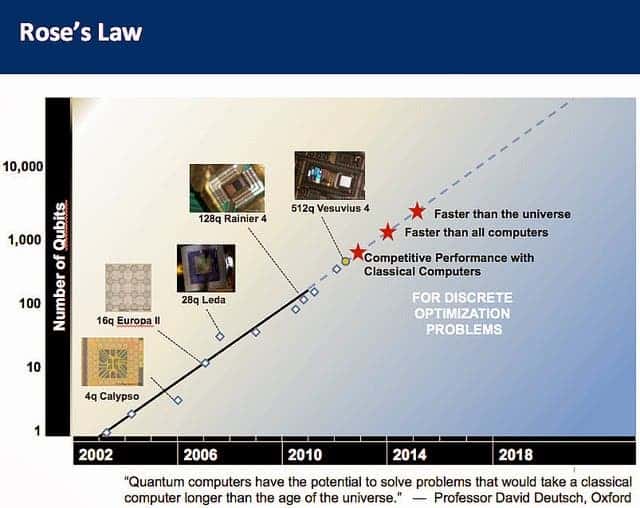

A lot of hype has been going D-Wave’s way in the past decade or so. The company is considered by many to be the leading quantum computing company in the world, boasting clients such as Lockheed Martin and Google. Before buying into the hype, though, it’s important to understand that to this day no one has been able to build a practical, working quantum computer. Still, in a recent interview with the Washington Post, D-Wave’s vice president of processor development, Jeremy Hilton, stated that the company has a 1000-qubit processor in the lab, which it plans to release in 2014.

“An important element of D-Wave’s technology is our roadmap. We’re continuously improving and building bigger processors. Recently we released a 512 qubit processor. If we look at the performance roadmap of that hardware, where performance in solving these problems relative to the state of the art, we saw that between that 128 qubit and 512 qubit, a 300,000x improvement in performance. That kind of performance gain is really unprecedented.

With that 512 qubit processor, it was able to meet and match the state of the art in classical algorithms and computers. It’s very exciting that we had achieved that. So when we look at that we say we continue to make these things bigger and more powerful.

Right now, we have a 1000 qubit processor in our lab. We plan to release it later in 2014. The major thing that’s changing aside from some of the design details is the scale of the problem you can represent, going from a 500-variable graph to a 1000-variable graph. Complexity of that is growing tremendously. [It leads to an] unimaginable exponential blowup of the number of solutions. That scale of problems is getting that much harder for classical algorithms to solve.

[After that] we’re planning to release a 2000-bit processor design. That’s pushing into a scale of territory where we’re tackling problems that are very difficult for people to solve [with conventional methods]. The community is working on getting a few qubits to work at the scale they’re trying to work at.

We’re at a point where we see that our current product is matching the performance of state-of-the-art classical computers. Over the next few years, we should surpass them. The ideal is to get into a space that is fundamentally intractable with classical machines. In the short term all we focus on is showing some scaling advantage and being able to pull away from that classical state of the art.”
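To put the "exponential blowup" Hilton mentions into numbers, here is a minimal Python sketch. It assumes each variable in the problem is binary (0 or 1), so a problem with n variables has 2^n possible assignments. The function name `num_assignments` is made up for this illustration and is not part of any D-Wave software.

```python
def num_assignments(n_variables: int) -> int:
    """Count the distinct 0/1 assignments of n binary variables (assumed model)."""
    return 2 ** n_variables


small = num_assignments(500)    # the 500-variable graph from the interview
large = num_assignments(1000)   # the 1000-variable graph from the interview

# Report the order of magnitude via the number of decimal digits.
print(f"500 variables:  roughly 10^{len(str(small)) - 1} candidate solutions")
print(f"1000 variables: roughly 10^{len(str(large)) - 1} candidate solutions")

# Doubling the variable count squares the size of the search space.
print("doubling squares the search space:", large == small * small)
```

Under that assumption, doubling the number of variables does not double the number of candidate solutions; it squares it. That is why exhaustively checking every answer quickly becomes hopeless, although it says nothing on its own about how well cleverer classical algorithms cope.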

A new age of computing or a new age of hype?

It’s hard to explain what a quantum computer is in everyday terms and analogies. The gist is that a quantum computer would, in theory, exploit quantum mechanics: laws of physics that are not familiar from everyday life but have been familiar to physicists for 100 years.

D-Wave has not built a general-purpose quantum computer. The company has never claimed to have done so, and it’s important not to be fooled into that assumption. Think of its machine as an application-specific processor, tuned to perform one task: solving discrete optimization problems. This task happens to map to many real-world applications, from finance to molecular modeling to machine learning, but it is not going to change our current personal computing tasks. In most cases, the quantum computer would act as an accelerating coprocessor to a classical compute cluster.

For instance, in the same interview with the Washington Post, Hilton describes the company’s work with Google in 2009, when they built a car-recognition algorithm that runs on D-Wave’s 128-qubit processor. According to Hilton, the algorithm was “comparable or slightly better than what they were able to do [with conventional computers]”. Wait, wouldn’t a quantum computer be able to do much more than that? Isn’t the whole point that quantum computers would eventually be able to outperform any possible classical computer?

That’s because D-Wave didn’t build a universal quantum computer. What the company makes instead are called quantum annealing machines. Quantum annealing is the process by which qubits, the basic unit of information in a quantum computer, are slowly tuned (annealed) from their superposition state (where they are 0 and 1 at the same time) into a classical state (where they are either 0 or 1). D-Wave quantum computers use this process to solve optimization problems in which many criteria need to be considered in order to come up with the best solution.
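To give a feel for what "annealing toward the best solution" means, here is a rough sketch of simulated annealing, the classical cousin of quantum annealing. It is not D-Wave’s method or API: it uses a falling temperature rather than quantum effects, and the toy problem, the function names and every parameter value are assumptions chosen only for illustration.

```python
import math
import random


def energy(spins, couplings):
    """Cost of an assignment: sum of J * s_a * s_b over all coupled pairs."""
    return sum(j * spins[a] * spins[b] for (a, b), j in couplings.items())


def simulated_annealing(n, couplings, steps=5000, t_start=2.0, t_end=0.01, seed=0):
    """Classical simulated annealing over n variables that take values -1 or +1."""
    rng = random.Random(seed)
    spins = [rng.choice([-1, 1]) for _ in range(n)]
    current = energy(spins, couplings)
    best, best_spins = current, spins[:]
    for step in range(steps):
        # Lower the temperature gradually from t_start to t_end.
        t = t_start * (t_end / t_start) ** (step / (steps - 1))
        i = rng.randrange(n)
        spins[i] = -spins[i]                      # propose flipping one variable
        candidate = energy(spins, couplings)
        delta = candidate - current
        if delta <= 0 or rng.random() < math.exp(-delta / t):
            current = candidate                   # accept the flip
            if current < best:
                best, best_spins = current, spins[:]
        else:
            spins[i] = -spins[i]                  # reject the flip and undo it
    return best, best_spins


# Hypothetical toy problem: a ring of 6 variables whose neighbours "prefer" to differ.
couplings = {(i, (i + 1) % 6): 1.0 for i in range(6)}
best_energy, best_spins = simulated_annealing(6, couplings)
print(best_energy, best_spins)
```

For this ring the lowest possible cost is -6, reached when neighbouring variables alternate between -1 and +1. Early on, the high temperature lets the search accept worse answers and roam widely; as it cools, the search settles into a low-cost assignment, loosely mirroring how annealed qubits settle into definite 0 or 1 values.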

There isn’t just one way to build a quantum computer, and D-Wave is apparently exploring only one model, called adiabatic quantum computing. MIT professor Scott Aaronson says he doesn’t buy into the D-Wave hype. While he admits the company is making some interesting contributions, he says there has been no conclusive evidence so far that its labs are producing provably quantum machines capable of feats such as massive factoring. D-Wave also has a reputation for being very secretive, with ties to national security agencies.
