Prosthetic limbs have come an incredibly long way in recent years. Brain-computer interfaces, coupled with highly articulated artificial limbs, can now allow a disabled individual to move an artificial hand (with up to seven degrees of freedom!) and individual fingers just by thinking about the movement they want the limb to perform. This is powerful and incredible science, the kind you might have read about in sci-fi novels and futurists’ predictions. The future is now, but there is still a long way to go before these prosthetics become fully clinically viable. For one, prosthetics are missing haptic feedback. You can send an electrical brain signal to your prosthetic’s chip to instruct the prosthetic to perform an action, but you don’t get a signal back that relays sensations like touch, pressure, or contact with an object. If the communication can be made to work one way, there is good reason to think it can be made two-way as well.

This is exactly what scientists at the University of Chicago are attempting. They have yet to build a viable prosthetic that relays sensory input back in real time, but the experiments they’ve done so far lay the groundwork for the touch-sensitive prosthetics of tomorrow.

“To restore sensory motor function of an arm, you not only have to replace the motor signals that the brain sends to the arm to move it around, but you also have to replace the sensory signals that the arm sends back to the brain,” said the study’s senior author, Sliman Bensmaia, PhD, assistant professor in the Department of Organismal Biology and Anatomy at the University of Chicago.

“We think the key is to invoke what we know about how the brain of the intact organism processes sensory information, and then try to reproduce these patterns of neural activity through stimulation of the brain.”

Bensmaia and colleagues ran a series of experiments on monkeys, whose sensory systems are highly similar to those of humans. The purpose of this work was to identify the patterns of neural activity that occur during natural object manipulation and then induce those patterns through artificial means.

The touch of brilliance

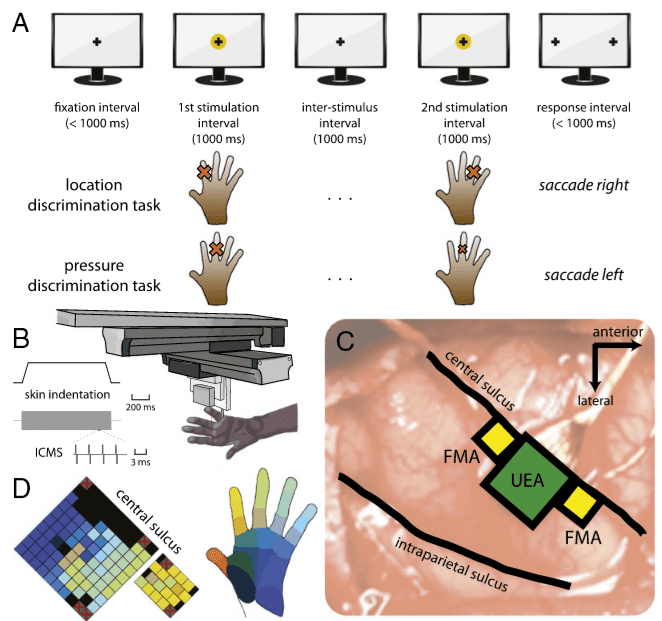

In the first experiment, the researchers focused on analyzing and measuring brain activity during contact, when the skin is touched. The animals were trained to identify several patterns of physical contact with their fingers. Researchers then connected electrodes to the areas of the brain corresponding to each finger and replaced physical touches with electrical stimuli delivered to the appropriate areas. The animals responded to the artificial stimuli as if they came from a genuine touch.

In a second experiment, the researchers sought to relay the sensation of pressure. This time, they developed an algorithm that fed back an appropriate amount of electrical current to elicit a sensation of pressure. Again, the animals’ response was the same whether the stimuli were felt through their fingers or delivered through artificial means.
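The article does not describe the algorithm itself, so the following is only a minimal illustrative sketch of the general idea: turning a pressure reading from a prosthetic fingertip into a stimulation amplitude. The function name `pressure_to_current`, the linear mapping, and all numeric limits are hypothetical assumptions, not the researchers' method or values.

```python
def pressure_to_current(pressure, max_pressure=1.0,
                        min_current_ua=20.0, max_current_ua=100.0):
    """Map a pressure reading to a stimulation amplitude in microamps.

    Hypothetical linear mapping for illustration only; the limits are
    made-up numbers, not values from the study.
    """
    if pressure <= 0:
        # No contact, so no stimulation is delivered.
        return 0.0
    # Clamp to the sensor's range so the output never exceeds the ceiling.
    fraction = min(pressure / max_pressure, 1.0)
    return min_current_ua + fraction * (max_current_ua - min_current_ua)


if __name__ == "__main__":
    for reading in (0.0, 0.25, 0.5, 1.0, 2.0):
        print(reading, pressure_to_current(reading))
```

The clamp at the upper limit reflects a design choice any such system would plausibly need: harder presses should feel stronger, but the current should never rise past a fixed safe ceiling.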

In the third and last experiment, the interdisciplinary team studied contact events such as grabbing, gripping, and manipulating objects. When the monkey’s hand first touches or releases an object, a specific burst of electrical activity occurs in the brain. After mimicking these bursts artificially, the researchers saw that, yet again, they could elicit a genuine response to an artificial stimulus.
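As a rough illustration of what "contact events" means in signal terms, the sketch below scans a series of fingertip pressure samples and marks the moments when contact begins or ends, which is when a feedback system would trigger a burst. The function name `contact_events` and the threshold value are hypothetical assumptions; the article does not say how the researchers detected or encoded these events.

```python
def contact_events(samples, threshold=0.05):
    """Return (index, kind) pairs where contact starts or stops.

    Hypothetical sketch: 'samples' is a sequence of pressure readings,
    and any reading above 'threshold' counts as touching.
    """
    events = []
    in_contact = False
    for index, pressure in enumerate(samples):
        touching = pressure > threshold
        if touching and not in_contact:
            events.append((index, "onset"))   # hand first touches the object
        elif not touching and in_contact:
            events.append((index, "offset"))  # hand releases the object
        in_contact = touching
    return events


if __name__ == "__main__":
    print(contact_events([0.0, 0.0, 0.3, 0.4, 0.0, 0.0]))
```

Only the transitions produce events here; a steady grip produces none, which matches the article's description of bursts occurring when the hand first touches or releases an object.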


This proof of concept suggests that a brain interface can carry a precise, coordinated set of signals in both directions. First, it instructs the prosthetic to perform a certain action (clench, move a finger in a particular way, grab a coffee mug, and so on). Second, it sends signals from the prosthetic back to the brain, where the wearer experiences them as sensations.

“The algorithms to decipher motor signals have come quite a long way, where you can now control arms with seven degrees of freedom. It’s very sophisticated. But I think there’s a strong argument to be made that they will not be clinically viable until the sensory feedback is incorporated,” Bensmaia said. “When it is, the functionality of these limbs will increase substantially.”

The University of Chicago team’s groundbreaking work is described in a paper published online in the journal Proceedings of the National Academy of Sciences.
